Financial services are entering a new phase, one where AI doesn’t just analyze data but securely interacts with regulated financial infrastructure in real time.

At Infinum, we connected a ChatGPT App to live banking data, and we know firsthand the challenges and considerations that involves. Using the Model Context Protocol (MCP) and PSD2 open banking APIs, we enabled users to authenticate once and then query balances, transactions, and spending summaries conversationally, all without leaving ChatGPT.

This post walks you through the architecture, how authorization works, and how users can retrieve live banking data directly inside ChatGPT. We’ll also cover the design decisions and limitations that came up while building it.

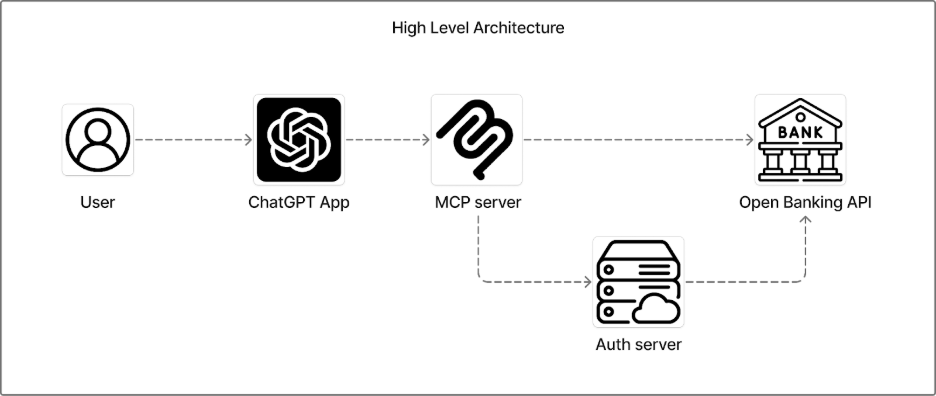

A Glance at the Architecture

The system is built from four components. Understanding how they relate to each other is the starting point for everything else — including why the security model works the way it does.

| Component | Role |
|---|---|
| Open Banking API provider | Source of account, balance, and transaction data via PSD2 |
| Auth server (OAuth 2.1) | Handles user consent and issues access tokens |
| MCP server | Defines tools, enforces access control, owns the audit trail |
| ChatGPT App | User-facing interface; connects to MCP and auth endpoints |

The ChatGPT App configuration is deliberately minimal: a public MCP server URL and an OAuth-enabled flag. Everything else (auth logic, access control, data scoping) lives server-side in the MCP layer. That separation is a feature, not an oversight, and it shapes how we handle authentication and permissions.

OAuth and PSD2 Scopes

This layer is critical for protecting access to sensitive financial data. We use OAuth 2.1, the revision of the industry-standard OAuth framework that consolidates OAuth 2.0 with its security best practices. Once the user authenticates and grants explicit consent, the provider issues an access token. This token allows our system to retrieve only the permitted banking data (such as balances or transactions) through the provider’s APIs.

When initiating the OAuth flow, we explicitly declare which PSD2 permission scopes the token should cover. In our implementation, we request only three:

- Accounts – account metadata (number, currency, institution)

- Balances – current and available balance

- Transactions – transaction history

Narrower scopes mean a cleaner consent screen and a smaller blast radius if a token is compromised. This maps directly to the principle of least privilege: request only what the feature actually needs.
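To make the scope request concrete, here is a minimal sketch of building the OAuth 2.1 authorization URL. The scope strings `accounts`, `balances`, and `transactions`, the endpoint, and the client values are placeholders; real PSD2 providers name scopes differently, and some model consent as a separate resource rather than plain scope strings.

```typescript
// Hypothetical scope identifiers: each PSD2 provider defines its own scope names.
type Psd2Scope = "accounts" | "balances" | "transactions";

// Least privilege: request only the three read scopes the features need.
const REQUESTED_SCOPES: readonly Psd2Scope[] = ["accounts", "balances", "transactions"];

interface AuthorizationRequest {
  authorizeEndpoint: string; // provider's authorization endpoint (placeholder)
  clientId: string;
  redirectUri: string;
  state: string; // opaque CSRF value, checked when the provider redirects back
  codeChallenge: string; // PKCE S256 challenge; PKCE is required in OAuth 2.1
}

function buildAuthorizationUrl(
  req: AuthorizationRequest,
  scopes: readonly Psd2Scope[] = REQUESTED_SCOPES
): string {
  const params: [string, string][] = [
    ["response_type", "code"], // authorization code grant (OAuth 2.1 drops the implicit grant)
    ["client_id", req.clientId],
    ["redirect_uri", req.redirectUri],
    ["scope", scopes.join(" ")], // OAuth scopes are space-delimited
    ["state", req.state],
    ["code_challenge", req.codeChallenge],
    ["code_challenge_method", "S256"],
  ];
  const query = params
    .map(([key, value]) => encodeURIComponent(key) + "=" + encodeURIComponent(value))
    .join("&");
  return req.authorizeEndpoint + "?" + query;
}
```

Passing a narrower array, for example `["balances"]`, yields a correspondingly narrower consent request.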

90-day re-authentication (PSD2 requirement)

PSD2 mandates re-authentication every 90 days. Our implementation satisfies this by design: tokens are short-lived and tied to individual sessions. Users re-authenticate each time they start a new session, which in practice happens far more frequently than every 90 days. No special tracking or forced re-auth flow is needed.
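A minimal sketch of why no separate 90-day tracking is needed, assuming a hypothetical one-hour token lifetime and an in-memory `Session` shape (both are illustrative assumptions): because each token expires with its session, the re-authentication check reduces to an expiry comparison.

```typescript
// Hypothetical session shape: the access token lives only as long as the session.
interface Session {
  accessToken: string;
  issuedAtMs: number; // epoch ms when the token was issued
  expiresAtMs: number; // epoch ms after which the token is no longer accepted
}

const DAY_MS = 24 * 60 * 60 * 1000;
const PSD2_REAUTH_WINDOW_MS = 90 * DAY_MS;
// Assumed token lifetime of one hour; any value well below 90 days behaves the same.
const TOKEN_TTL_MS = 60 * 60 * 1000;

function createSession(accessToken: string, nowMs: number): Session {
  return { accessToken, issuedAtMs: nowMs, expiresAtMs: nowMs + TOKEN_TTL_MS };
}

// True when the user must go through the OAuth consent flow again.
function requiresReauth(session: Session | undefined, nowMs: number): boolean {
  if (!session) return true; // a new session always starts with authentication
  return nowMs >= session.expiresAtMs; // an expired token means authenticating again
}

// Design check: a token that cannot outlive the PSD2 window needs no extra tracking.
function ttlSatisfiesPsd2(ttlMs: number): boolean {
  return ttlMs < PSD2_REAUTH_WINDOW_MS;
}
```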

What happens when a user withholds a scope? Currently, the initial token grants all three read scopes together. We don’t issue per-resource tokens. If a scope is not granted, we simply don’t return that data. We don’t surface an error or attempt to call the API without permission.
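The “omit, don’t error” behavior can be sketched as a function that calls a provider endpoint only when its scope is present. The `Fetchers` object stands in for hypothetical provider calls, and the scope names are placeholders.

```typescript
type Psd2Scope = "accounts" | "balances" | "transactions";

// Hypothetical provider calls; each one needs the matching scope on the token.
interface Fetchers {
  accounts: () => unknown;
  balances: () => unknown;
  transactions: () => unknown;
}

type ScopedResult = { [K in Psd2Scope]?: unknown };

const ALL_SCOPES: Psd2Scope[] = ["accounts", "balances", "transactions"];

function fetchPermittedData(granted: readonly Psd2Scope[], fetchers: Fetchers): ScopedResult {
  const result: ScopedResult = {};
  ALL_SCOPES.forEach((scope) => {
    // Withheld scope: silently omit the data and never call the API for it.
    if (granted.indexOf(scope) === -1) return;
    result[scope] = fetchers[scope]();
  });
  return result;
}
```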

Taken together, this approach mirrors the security model used by many mobile banking and FinTech applications, where OAuth-based authorization ensures that access is controlled, permission-based, and revocable at any time.

But once a user is authenticated, how does ChatGPT know which banking actions it can perform on their behalf?

That’s where the MCP server comes into play.

MCP Server: Tool Definitions as the Security Boundary

This is where access control lives. Every capability the model has is an explicitly defined tool. There is no freeform API access: if a tool for initiating payments doesn’t exist, the model has no mechanism to trigger one. The tool definitions are the boundary.

We’ve defined a specific set of actions, including:

- Checking whether a user is authenticated

- Listing balance information

- Listing transactions

- Fetching UI components
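The idea that tool definitions are the boundary can be sketched as a registry that dispatches only to explicitly registered tools. This is a hypothetical, dependency-free illustration, not the MCP SDK’s API; in a real server, the SDK’s own tool registration plays the role of `register`, and `list-balances` is an invented name.

```typescript
// Hypothetical, dependency-free model of an MCP tool registry.
type ToolHandler = (args: { [key: string]: unknown }) => unknown;

interface ToolDefinition {
  name: string;
  description: string; // the text the model reads when choosing a tool
  handler: ToolHandler;
}

class ToolRegistry {
  private readonly tools: { [name: string]: ToolDefinition } = {};

  register(tool: ToolDefinition): void {
    this.tools[tool.name] = tool;
  }

  // Dispatches a model-issued call. Unregistered names are rejected outright:
  // if no payment tool is registered, no code path can initiate a payment.
  call(name: string, args: { [key: string]: unknown }): unknown {
    if (!Object.prototype.hasOwnProperty.call(this.tools, name)) {
      throw new Error("Unknown tool: " + name);
    }
    return this.tools[name].handler(args);
  }

  // The complete capability surface exposed to the model.
  listNames(): string[] {
    return Object.keys(this.tools);
  }
}

const registry = new ToolRegistry();
registry.register({
  name: "auth-check",
  description: "Checks whether the user has an active, valid session.",
  handler: () => ({ authenticated: true }), // stub
});
registry.register({
  name: "list-balances", // hypothetical tool name
  description: "Lists current and available balances.",
  handler: () => ({ current: 1250.4, available: 1100, currency: "EUR" }), // stub data
});
```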

The Auth Check Gate

The `auth-check` tool acts as a gate for all other tools. Before any data-fetching action is invoked, the model is instructed to first verify that the user has an active, valid session. This dependency is structural: the tool descriptions themselves specify that the balance, transaction, and account tools must not be called unless `auth-check` has returned a confirmed authenticated state. The model cannot bypass this because it has no alternative path to the data.
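A sketch of the gate, with one addition beyond what the paragraph describes: besides writing the dependency into the tool descriptions, each data tool here re-checks the session on the server (`requireSession`), so the rule holds even if the model skips `auth-check`. The description wording, `SessionStore`, and `listBalances` are hypothetical.

```typescript
// Hypothetical in-memory session store keyed by MCP session ID.
interface StoredSession {
  accessToken: string;
  expiresAtMs: number;
}
interface SessionStore {
  [sessionId: string]: StoredSession | undefined;
}

interface Balances {
  current: number;
  available: number;
  currency: string;
}

// The dependency written into the tool metadata the model reads (illustrative wording).
const AUTH_CHECK_DESCRIPTION =
  "Checks whether the user has an active, valid banking session. Call this before any other tool.";
const LIST_BALANCES_DESCRIPTION =
  "Returns current and available balances. Do not call unless auth-check has returned authenticated: true.";

function authCheck(store: SessionStore, sessionId: string, nowMs: number): { authenticated: boolean } {
  const session = store[sessionId];
  return { authenticated: session !== undefined && nowMs < session.expiresAtMs };
}

// Server-side backstop (an assumption beyond the description-based gate):
// data tools re-verify the session instead of trusting the model's call order.
function requireSession(store: SessionStore, sessionId: string, nowMs: number): string {
  const session = store[sessionId];
  if (session === undefined || nowMs >= session.expiresAtMs) {
    throw new Error("Not authenticated: call auth-check and complete the OAuth flow first.");
  }
  return session.accessToken;
}

function listBalances(
  store: SessionStore,
  sessionId: string,
  nowMs: number,
  fetchBalances: (accessToken: string) => Balances
): Balances {
  const token = requireSession(store, sessionId, nowMs); // throws before any provider call
  return fetchBalances(token);
}
```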

The Audit Trail

Every tool invocation is logged at the MCP server level, producing a structured audit trail.

Each log entry captures:

- tool name,

- timestamp,

- input parameters passed by the model,

- response returned.

This gives us a complete operational record for debugging and for verifying that the model calls the right tools.
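A minimal sketch of server-level audit logging, assuming a hypothetical `withAudit` wrapper around each tool handler and a pluggable `sink` (a log shipper, a database, or an array in tests). Recording failed calls with an `error` field is an assumption; the list above names only the four fields.

```typescript
// Structured audit entry with the four fields listed above.
interface AuditEntry {
  tool: string;
  timestamp: string; // ISO 8601
  input: unknown; // parameters passed by the model
  output: unknown; // response returned to the model
}

type Handler = (input: unknown) => unknown;

// Wraps a tool handler so every invocation is recorded, including failures.
function withAudit(
  tool: string,
  handler: Handler,
  sink: (entry: AuditEntry) => void,
  now: () => Date = () => new Date()
): Handler {
  return (input: unknown) => {
    const timestamp = now().toISOString();
    try {
      const output = handler(input);
      sink({ tool, timestamp, input, output });
      return output;
    } catch (err) {
      sink({ tool, timestamp, input, output: { error: String(err) } });
      throw err; // the caller still sees the failure
    }
  };
}
```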

The UI Components

UI components are served through the MCP layer and have no independent access to provider APIs. When a component needs user-specific data (a balance card, a transaction list), it receives a pre-fetched payload passed through the tool response that triggered it. The JWT access token and granted scopes are held server-side and never forwarded to the component. The component renders only what the MCP server explicitly provides.
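The following sketch shows the shape of that hand-off, assuming hypothetical `ServerSession` and balance types. The result is loosely modeled on an MCP tool result, which can carry human-readable `content` alongside machine-readable `structuredContent`; the exact fields a component reads should be checked against the Apps SDK documentation.

```typescript
// Server-side only: never serialized into a tool response.
interface ServerSession {
  accessToken: string; // JWT access token
  grantedScopes: string[];
}

// Hypothetical raw balance from the provider, including an internal field.
interface ProviderBalance {
  accountId: string;
  amount: number;
  currency: string;
  providerInternalRef: string;
}

// What the balance-card component receives: display data only.
interface BalanceCardPayload {
  accountId: string;
  amount: number;
  currency: string;
}

// Loosely modeled on an MCP tool result (shape is an assumption).
interface ToolResult {
  content: { type: "text"; text: string }[];
  structuredContent: { balanceCard: BalanceCardPayload };
}

function balanceToolResult(
  session: ServerSession,
  fetchBalance: (accessToken: string) => ProviderBalance
): ToolResult {
  if (session.grantedScopes.indexOf("balances") === -1) {
    throw new Error("Balances scope not granted"); // hypothetical scope name
  }
  const raw = fetchBalance(session.accessToken); // the token is used here, server-side only
  // Copy only display fields; the token and internal references never leave the server.
  const card: BalanceCardPayload = { accountId: raw.accountId, amount: raw.amount, currency: raw.currency };
  return {
    content: [{ type: "text", text: `Balance: ${card.amount} ${card.currency}` }],
    structuredContent: { balanceCard: card },
  };
}
```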

The entire implementation is built on OpenAI’s new Apps SDK.

A note on Infobip’s model: the same MCP pattern applies to Infobip’s communication infrastructure (SMS, WhatsApp, RCS, Voice, Viber, 2FA). Each channel is exposed as a dedicated MCP tool with its own scope and constraints. The enforcement mechanism is identical: tools as the boundary, least privilege by design.

Provider Agnosticism

One decision worth calling out explicitly: the architecture is Open Banking provider-agnostic.

Provider-agnostic does not mean provider-independent, though: the system cannot function without an Open Banking API provider. All banking data comes from the provider, so we must connect to one and retrieve the data securely.

The MCP server communicates with the provider through a standardized PSD2 interface, which means the underlying provider can be swapped without changes to the MCP layer or the ChatGPT App.

In practice, providers vary in how faithfully they implement the PSD2 spec, so some adaptation at the integration layer is expected. But the core architecture has no single-provider dependency baked in.
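Provider agnosticism usually comes down to an adapter layer. In this hypothetical sketch, the MCP tools depend only on the `OpenBankingProvider` interface, and a provider-specific adapter absorbs differences such as field names and amount formats (here, an invented provider that returns minor units as strings).

```typescript
// Provider-neutral shape the MCP tools work with (hypothetical).
interface Balance {
  accountId: string;
  current: number;
  available: number;
  currency: string;
}

interface OpenBankingProvider {
  getBalances(accessToken: string): Balance[];
}

// Invented provider format: terse field names, amounts as minor-unit strings.
interface ProviderAResponse {
  acct: string;
  bal_cur: string;
  bal_avail: string;
  ccy: string;
}

class ProviderAAdapter implements OpenBankingProvider {
  private readonly fetchRaw: (token: string) => ProviderAResponse[];

  constructor(fetchRaw: (token: string) => ProviderAResponse[]) {
    this.fetchRaw = fetchRaw;
  }

  getBalances(accessToken: string): Balance[] {
    return this.fetchRaw(accessToken).map((r) => ({
      accountId: r.acct,
      // Assumes two decimal places (true for EUR, not for every currency).
      current: parseInt(r.bal_cur, 10) / 100,
      available: parseInt(r.bal_avail, 10) / 100,
      currency: r.ccy,
    }));
  }
}

// Tool logic sees only the interface, so swapping providers means swapping adapters.
function totalAvailable(provider: OpenBankingProvider, token: string, currency: string): number {
  return provider
    .getBalances(token)
    .filter((b) => b.currency === currency)
    .reduce((sum, b) => sum + b.available, 0);
}
```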

Dynamic Client Registration (DCR)

Since we integrate through a single Open Banking API provider rather than directly with individual bank authorization servers, DCR is handled at the provider level. It’s not something our architecture needs to address directly.

LLM Limitations Worth Knowing

With the architecture in place, there are a few model-level constraints that affect how far the current implementation can stretch.

Rapidly changing financial data (exchange rates, stock prices)

The model doesn’t have live financial context by default. The right approach is a dedicated MCP tool that fetches current data before any function that needs it. We haven’t implemented this yet, but the pattern is straightforward: add a `get-market-context` tool and instruct the model to call it first.
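A sketch of what the proposed `get-market-context` tool could look like. Since the tool doesn’t exist yet, the description text, the JSON Schema for `pairs`, and the `fetchRate` stand-in are all assumptions.

```typescript
// Hypothetical tool definition; inputSchema is a plain JSON Schema object.
interface ToolSpec {
  name: string;
  description: string;
  inputSchema: object;
}

const getMarketContext: ToolSpec = {
  name: "get-market-context",
  description:
    "Returns current exchange rates for the requested currency pairs. " +
    "Call this BEFORE any tool or answer that converts or compares amounts across currencies; " +
    "never rely on remembered rates.",
  inputSchema: {
    type: "object",
    properties: {
      pairs: { type: "array", items: { type: "string", pattern: "^[A-Z]{3}/[A-Z]{3}$" } },
    },
    required: ["pairs"],
  },
};

interface RateQuote {
  pair: string;
  rate: number;
  asOf: string; // lets the model state how fresh the figure is
}

const PAIR_PATTERN = /^[A-Z]{3}\/[A-Z]{3}$/;

// fetchRate is a stand-in for whatever market-data source would be used.
function handleGetMarketContext(
  pairs: string[],
  fetchRate: (pair: string) => number,
  now: Date
): RateQuote[] {
  const asOf = now.toISOString();
  return pairs.map((pair) => {
    if (!PAIR_PATTERN.test(pair)) throw new Error("Invalid currency pair: " + pair);
    return { pair, rate: fetchRate(pair), asOf };
  });
}
```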

Local regulation and terminology outside the training set

For the use cases this app targets — consumer-facing, non-specialist — the base model’s training data has been sufficient. For regulated or jurisdiction-specific deployments, a RAG layer or a context-injection tool would be the right extension point.

Keeping Financial Data Out of the LLM Context

Beyond model limitations, there’s a broader question about data handling that’s directly relevant to PSD2 compliance.

We control what we can on our side: MCP tools return lean, structured payloads (not raw API objects), and no financial data is stored server-side beyond the lifetime of the request. What happens inside OpenAI’s platform is governed by their data policies, and those policies vary by account type.
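A sketch of the lean-payload idea: map the provider’s raw transaction to only the fields the model needs. The raw field names are loosely modeled on Berlin Group NextGenPSD2 naming and should be treated as an assumption, as should the signed-amount convention; providers differ.

```typescript
// Raw shape loosely modeled on Berlin Group NextGenPSD2 field names (an assumption;
// providers differ and return many more fields than shown here).
interface RawTransaction {
  transactionId: string;
  bookingDate: string;
  valueDate?: string;
  transactionAmount: { amount: string; currency: string };
  creditorName?: string;
  debtorName?: string;
  creditorAccount?: { iban: string };
  debtorAccount?: { iban: string };
  remittanceInformationUnstructured?: string;
}

// The lean payload the model actually sees (hypothetical field set).
interface LeanTransaction {
  date: string;
  amount: number;
  currency: string;
  counterparty: string;
  description: string;
}

function toLean(t: RawTransaction): LeanTransaction {
  const amount = parseFloat(t.transactionAmount.amount); // assumes a signed amount
  // Identifiers such as transactionId and IBANs are deliberately not copied over.
  return {
    date: t.bookingDate,
    amount,
    currency: t.transactionAmount.currency,
    // Outgoing payments (negative amounts) name the creditor; incoming ones the debtor.
    counterparty: (amount < 0 ? t.creditorName : t.debtorName) || "Unknown",
    description: t.remittanceInformationUnstructured || "",
  };
}
```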

For any financial use case operating under PSD2, users should access the app through a ChatGPT Team or Enterprise account, or at minimum disable training in their data controls settings.

The End Result: Conversational Open Banking

Once a user connects their bank account through the OAuth flow, they can interact with their financial data conversationally inside ChatGPT.

Instead of opening a banking app and navigating through menus, the user can ask:

- “What’s my current balance?”

- “Show me my last 10 transactions.”

- “How much did I spend on subscriptions this month?”

- “Do I have any incoming payments this week?”
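Behind a question like “How much did I spend on subscriptions this month?” sits a plain aggregation that a tool can run server-side before anything reaches the model. A minimal sketch, assuming each transaction already carries a hypothetical `category` label (assigning categories is a separate problem) and that spending amounts are negative:

```typescript
// Hypothetical lean transaction with a category label already attached.
interface CategorizedTxn {
  date: string; // ISO date, YYYY-MM-DD
  amount: number; // signed; negative for spending (assumed convention)
  currency: string;
  category: string;
}

// Total spent in one category for a calendar month, in a single currency.
// Summing in integer cents avoids floating-point drift across many small amounts.
function spentInMonth(
  txns: CategorizedTxn[],
  category: string,
  year: number,
  month: number, // 1-12
  currency: string
): number {
  const prefix = year + "-" + (month < 10 ? "0" + month : String(month)) + "-";
  const cents = txns
    .filter(
      (t) =>
        t.category === category &&
        t.currency === currency &&
        t.amount < 0 &&
        t.date.indexOf(prefix) === 0
    )
    .reduce((sum, t) => sum + Math.round(-t.amount * 100), 0);
  return cents / 100;
}
```

Returning one number rather than the full transaction list also keeps the payload that reaches the model small.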

The experience feels like messaging a financial assistant who already understands your question and knows where to look. Behind the scenes, everything remains secure and permission-based, but from the user’s perspective, it’s effortless.