It usually starts the same way: a ticket lands, logs are opened, and someone with just enough context begins retracing a path that has likely been walked dozens of times before. Developer support, for all its complexity, is still mostly a repetitive task. At Infobip DevDays, two engineers showed how a Claude-based AI system using MCP can resolve tickets automatically in seconds.

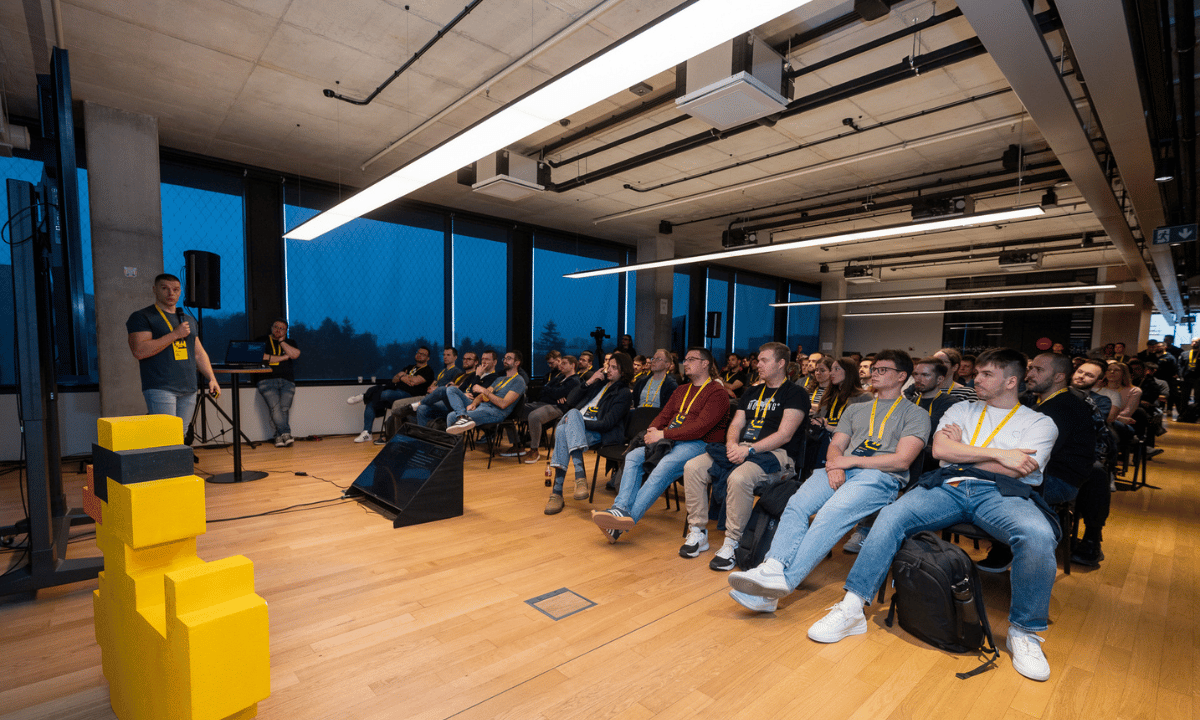

The lecture “Claude in the Loop: Automating Developer Support Workflows” at DevDays was a direct answer to the ticket issues engineers are facing today. Taking place at the Alpha Centauri Campus in Zagreb, Croatia, DevDays is Infobip’s own developer conference where new ideas are shared with over 1000 engineers and visitors.

Luciano Peranni and Frane Jelavić, the engineers behind the system, explained how they set up this process, aimed at helping developers.

Before introducing any model into the loop, they documented how troubleshooting actually works right now, in practice.

This meant breaking down several real support cases into concrete steps. These include straightforward moves such as understanding the ticket, fetching configuration, validating data, inspecting logs, and correlating changes. What came out of it was a map of knowledge, the kind that lives in your head and disappears when you leave work.

A simple “working hours” issue illustrated this point. On paper, it’s a basic configuration check, but in reality it requires you to know how data moves between multiple systems. This kind of knowledge is tough to scale without some form of structure, but it’s very easy to automate once that structure is in place.

Don’t Go Around Automating Everything

The key design decision was to split the problem instead of going all-out on automation. Deterministic steps such as database queries, log retrieval, and system calls remain in the domain of traditional services. The AI model, in this case Claude, is then used as a reasoning layer. This is the part that understands context and selects the right step to take, basically connecting the dots.

To make this safe and usable, Luciano and Frane’s team built a Model Context Protocol (MCP) layer. Instead of exposing raw infrastructure, MCP provides a set of actions the model can invoke: fetch configuration, read conversations, inspect logs, and identify changes. This layer of abstraction enforces boundaries, simplifies integrations, and makes the system accessible even to non-engineers.
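To make the idea of a bounded action layer concrete, here is a minimal sketch in plain Python. It is not the MCP SDK and not the team's implementation; the `ActionRegistry` class, the action names, and the in-memory data are all assumptions made up for illustration. The point it shows is that the model can only call what has been registered, and anything else is refused.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

# Hypothetical in-memory data standing in for real backend services.
FAKE_CONFIGS = {"acc-1": {"working_hours": "09:00-17:00", "timezone": "Europe/Zagreb"}}
FAKE_LOGS = {"acc-1": ["10:00 conversation created", "10:01 working_hours updated"]}


@dataclass(frozen=True)
class Action:
    name: str
    description: str
    handler: Callable[..., Any]


class ActionRegistry:
    """Exposes only registered actions; every other name is refused."""

    def __init__(self) -> None:
        self._actions: Dict[str, Action] = {}

    def register(self, name: str, description: str):
        # Decorator that adds a function to the set of exposed actions.
        def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
            self._actions[name] = Action(name, description, fn)
            return fn
        return decorator

    def describe(self) -> List[Dict[str, str]]:
        # The catalogue a model would see: names and descriptions only.
        return [{"name": a.name, "description": a.description}
                for a in self._actions.values()]

    def invoke(self, name: str, **kwargs: Any) -> Any:
        action = self._actions.get(name)
        if action is None:
            raise PermissionError(f"action not exposed: {name}")
        return action.handler(**kwargs)


registry = ActionRegistry()


@registry.register("fetch_configuration", "Return the configuration of one account")
def fetch_configuration(account_id: str) -> dict:
    return dict(FAKE_CONFIGS.get(account_id, {}))


@registry.register("inspect_logs", "Return recent log lines for one account")
def inspect_logs(account_id: str) -> list:
    return list(FAKE_LOGS.get(account_id, []))
```

Calling `registry.invoke("fetch_configuration", account_id="acc-1")` returns the stored configuration, while an unregistered name such as `"run_sql"` raises `PermissionError`. That refusal is the boundary the abstraction is meant to enforce.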

The system first parses the ticket, then classifies it and delegates it to a specialized agent, and lastly starts gathering evidence. To make sure everything is right, the model then checks configurations, scans logs, and correlates events. Compared with manual troubleshooting, the difference is mainly speed, but also consistency. What might take minutes, or longer during an on-call shift, can be resolved in seconds, and engineers are left with a structured report explaining what happened.
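The parse, classify, delegate, and gather flow can be sketched roughly as follows. This is an illustration under stated assumptions, not the system shown at DevDays: the `classify` function uses a keyword rule as a stand-in for the model call, and the agent names, categories, and evidence strings are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Ticket:
    ticket_id: str
    text: str


@dataclass
class Report:
    ticket_id: str
    category: str
    evidence: List[str] = field(default_factory=list)


def classify(ticket: Ticket) -> str:
    # Stand-in for the reasoning layer: a real system would ask the model.
    text = ticket.text.lower()
    if "working hours" in text:
        return "working_hours"
    if "not delivered" in text or "lost" in text:
        return "delivery"
    return "general"


# Hypothetical specialized agents; each returns the evidence it collected.
def working_hours_agent(ticket: Ticket) -> List[str]:
    return ["fetched working-hours configuration", "compared it with conversation time"]


def delivery_agent(ticket: Ticket) -> List[str]:
    return ["scanned delivery logs", "correlated send and delivery events"]


def general_agent(ticket: Ticket) -> List[str]:
    return ["collected recent logs for manual review"]


AGENTS: Dict[str, Callable[[Ticket], List[str]]] = {
    "working_hours": working_hours_agent,
    "delivery": delivery_agent,
}


def handle(ticket: Ticket) -> Report:
    category = classify(ticket)                 # classify
    agent = AGENTS.get(category, general_agent)  # delegate
    evidence = agent(ticket)                     # gather evidence
    return Report(ticket.ticket_id, category, evidence)
```

For example, `handle(Ticket("T-1", "Bot replies outside working hours"))` yields a report with category `working_hours` and the two evidence lines from that agent. Keeping the routing table as plain data makes adding a new specialized agent a one-line change.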

In one case, the system identified that a configuration change happened just one minute after a conversation was created. That detail both explained the issue and answered the question of who made the change before it was asked.
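The correlation in that example boils down to comparing timestamps. A minimal sketch, assuming a hypothetical `ConfigChange` record and an arbitrary five-minute window (neither comes from the talk):

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass(frozen=True)
class ConfigChange:
    changed_at: datetime
    changed_by: str
    field_name: str


def changes_near(created_at: datetime,
                 changes: List[ConfigChange],
                 window: timedelta = timedelta(minutes=5)) -> List[ConfigChange]:
    """Return changes within `window` of the conversation, closest first."""
    nearby = [c for c in changes if abs(c.changed_at - created_at) <= window]
    return sorted(nearby, key=lambda c: abs(c.changed_at - created_at))


# Hypothetical data: a conversation and two configuration changes.
created = datetime(2024, 1, 1, 10, 0)
history = [
    ConfigChange(datetime(2024, 1, 1, 8, 0), "bob", "greeting"),
    ConfigChange(datetime(2024, 1, 1, 10, 1), "alice", "working_hours"),
]
suspects = changes_near(created, history)
```

Here `suspects` contains only the change made at 10:01, along with who made it, which is the kind of detail a structured report can surface without anyone having to ask.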

Catch It Before the Alert Fires

The same pattern extends into observability. Alerts, especially noisy ones with a lot of information, are perfect candidates for automation.

Instead of waking someone up in the middle of the night to manually triage a spike in errors, the system parses the alert, gathers the context, and makes a decision on its own.

What makes this interesting is the judgement. In one example, the system concluded that an alert was a false positive caused by zero traffic during off-hours. This resulted in a quiet resolution of the issue with no need for more work.
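A false-positive check of this kind could look roughly like the sketch below. The `Alert` fields, the business-hours range, and the 5% error threshold are assumptions chosen for illustration; the real system's inputs and rules were not described in that detail, and weekends and time zones are ignored here.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class Alert:
    name: str
    fired_at: datetime
    request_count: int
    error_count: int


def is_off_hours(ts: datetime,
                 start: time = time(8, 0),
                 end: time = time(18, 0)) -> bool:
    # Assumed business hours of 08:00 to 18:00.
    return not (start <= ts.time() < end)


def triage(alert: Alert, error_threshold: float = 0.05) -> str:
    if alert.request_count == 0:
        # No traffic at night is expected; no traffic at noon is not.
        return "false_positive" if is_off_hours(alert.fired_at) else "escalate"
    error_rate = alert.error_count / alert.request_count
    return "escalate" if error_rate >= error_threshold else "monitor"
```

With these rules, an alert fired at 03:00 with zero requests is closed as a false positive, while the same zero-traffic alert at noon is escalated.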

The bigger shift, however, is moving from reactive to proactive. By continuously scanning logs and patterns, the system can detect issues before they trigger alerts. In practice, this means identifying anomalies (like message loss) before they impact users or breach thresholds. It’s a subtle shift, from firefighting to prevention.
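One way to picture the proactive part is a warning band that sits below the alert threshold. The sketch below is an assumption-laden illustration: the `Window` record, the 1% warning level, and the 5% alert level are invented for the example, and real detection would likely use richer signals than a single ratio.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Window:
    label: str
    sent: int
    delivered: int


def loss_ratio(w: Window) -> float:
    # Share of sent messages that were never delivered in this window.
    if w.sent == 0:
        return 0.0
    return (w.sent - w.delivered) / w.sent


def early_warnings(windows: List[Window],
                   warn_at: float = 0.01,
                   alert_at: float = 0.05) -> List[str]:
    """Windows with loss above the warning level but below the alert level."""
    return [w.label for w in windows if warn_at <= loss_ratio(w) < alert_at]


# Hypothetical per-window counters.
windows = [
    Window("10:00", 1000, 1000),  # no loss
    Window("10:05", 1000, 980),   # 2% loss: below the alert, worth a look
    Window("10:10", 1000, 900),   # 10% loss: the regular alert covers this
]
flagged = early_warnings(windows)
```

Only the 10:05 window ends up in `flagged`: it is the case that a threshold-based alert would have missed, which is where scanning ahead of the alert pays off.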

The architecture supports this evolution. It starts small, with clearly defined use cases, and grows by adding specialized agents. Each new scenario gets the same treatment: map it, structure it, hand it off. Over time, the system becomes a shared layer of intelligence across the team.

The result is a different distribution of knowledge, with faster support being there almost as a side effect. Instead of being concentrated in a few individuals, expertise becomes embedded in workflows. Onboarding gets easier, support becomes more scalable, and engineers get their time back.

Luciano and Frane concluded that “Claude in the Loop” doesn’t replace developers; it just removes the parts of their jobs that they should not be doing in the first place. What’s left is a cleaner, more focused engineering experience, where humans and AI operate in a loop that actually makes sense.