Blog

Explore a wide range of topics, get inspired by the latest trends, and learn how to create conversational experiences that make an impact on your bottom line.

Posted on June 2, 2026.

Benefits of chatbots for business and customers: The complete guide.

Discover the real benefits of chatbots built on an enterprise platform: 24/7 omnichannel support, 60% cost reduction, CDP-powered personalization, and AI agent escalation. With customer results, industry examples, and a full FAQ.

Posted on June 2, 2026.

Customer retention strategies 2025: 20+ ways to grow customer lifetime value.

Customer retention strategies that actually work. 21 tactics covering onboarding, personalization, loyalty programs, win-back campaigns, and churn prevention.

Posted on June 1, 2026.

Gibberlink: AI’s secret language explained (2026 update).

Learn how Gibberlink works, where it fits in the 2026 AI agent protocol stack, and what it means for enterprises building agentic workflows.

Posted on June 1, 2026.

eSIM vs SIM card: Differences, pros and cons.

What is an eSIM, how does it differ from a standard SIM, and what are the pros and cons? Learn this and much more!

Posted on May 29, 2026.

Infinum: Why communication belongs in the blueprint, not the backlog.

Banks have been digitizing for 50 years. But according to Infinum, most are still making the same mistake: treating trust and communication as an afterthought.

Posted on May 29, 2026.

Most popular messaging apps by country.

We compiled and analyzed data from multiple sources to produce what we believe is the definitive list of global penetration rates for the most popular messaging apps.

Posted on May 28, 2026.

McDonald’s Brazil: Beyond the golden arches into a new era of CX innovation.

Golden arches. Smarter conversations. Find out how McDonald's Brazil is using WhatsApp Business and chatbot automation to turn every order into a personalized experience.

Posted on May 27, 2026.

How to improve airline disruption management and crisis communication.

Airlines are facing constant disruptions. Learn how communication platforms enable effective airline crisis communication and disruption management with real-time passenger updates.

Posted on May 26, 2026.

No-code chatbot builder: How to build AI chatbots without coding.

Build AI chatbots without writing a single line of code. Learn what no-code chatbot builders can do, which features matter for enterprise teams, and how platforms like AgentOS let you deploy across 15+ channels from a single visual builder.

Posted on May 26, 2026.

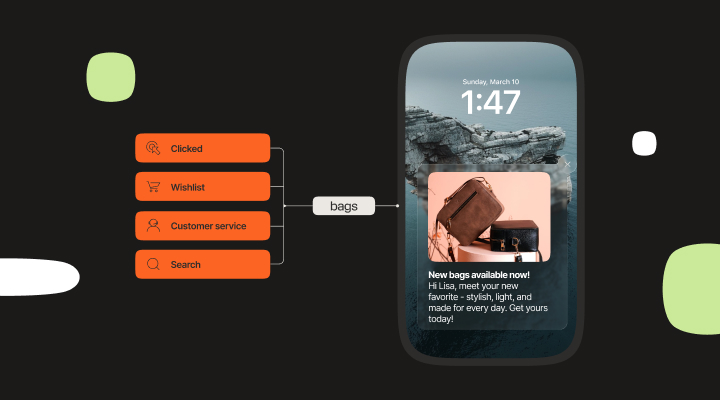

Benefits of a customer data platform, and why conversational CDPs deliver more.

Learn the top benefits of a customer data platform and why conversational CDPs deliver more through native messaging activation and AI agent integration.

Posted on May 22, 2026.

What is a customer data platform and why do you need one?

A CDP creates a single database that you can leverage to boost your CX. Read on to learn what a customer data platform is and how you can use it.

Posted on May 21, 2026.

B2B customer data platform: What it is and why B2B needs a different kind of CDP.

A B2B customer data platform unifies account-level data across every touchpoint, from CRM and messaging to AI conversations. Learn what makes B2B CDPs different, what features matter, and how Infobip's Conversational CDP within AgentOS adds conversational data no other CDP captures.
