Blog

Explore a wide range of topics, get inspired by the latest trends, and learn how to create conversational experiences that make an impact on your bottom line.

Results for

results found

Google RCS vs. Apple Messages for Business

Get an in-depth look at Google RCS vs. Apple Messages for Business and how brands can elevate their customer experiences with each channel.

Apple supports RCS on iOS 18

Apple is supporting RCS on iOS devices. Find out what it means for the future of messaging.

6 CPaaS trends shaping the future of customer communication in 2024 and beyond

The communications platform as a service (CPaaS) market is rapidly growing. But how is the market evolving, and what are the latest trends? Here, we provide a snapshot of the 2024 Gartner® Magic Quadrant™ for Communications Platform as a Service, together with some key takeaways from Infobip.

Conversational AI vs. Generative AI: What’s the difference?

Get an in-depth look at the difference between conversational AI vs. generative AI and how they can work together to help you elevate customer experiences.

23 of the best free AI courses you can start today

Level up your AI and machine learning knowledge in 2024 with our curated list of the best free courses and resources available on the web.

How RCS makes texting better in the US

Explore how RCS is the next big messaging evolution in the US, combining SMS, MMS, and iMessage into one powerful channel and reshaping how businesses communicate with their customers.

Omnichannel customer service: The key to unlocking a 5-star experience

We look at best practices for delivering an omnichannel customer support and service experience for customers who are used to having the power of choice.

Overhyped or underrated? Assessing the true impact of generative AI

As the buzz around GenAI starts to fizzle, has it lived up to our high expectations, and what does the future look like for GenAI?

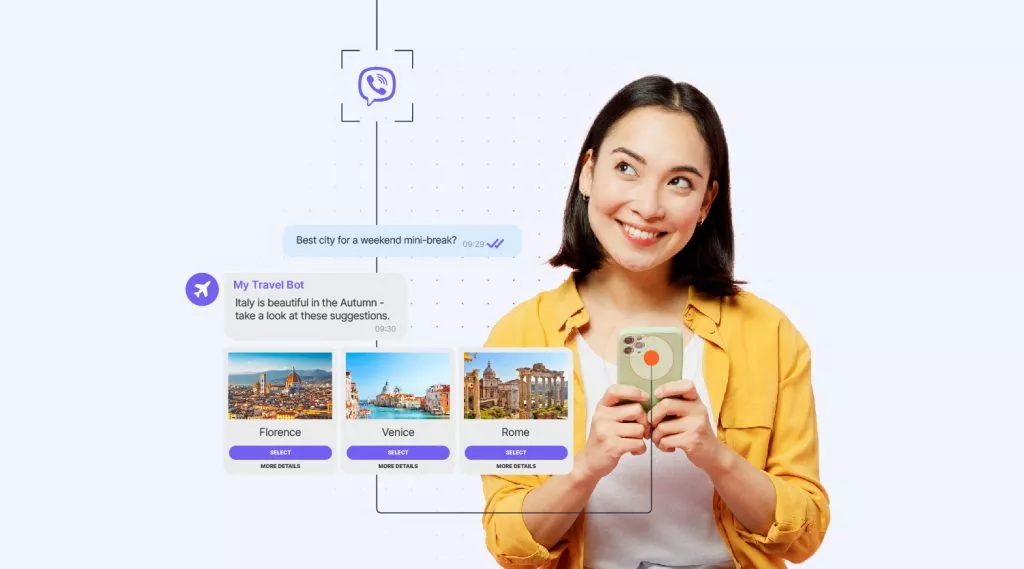

A quick guide to Viber chatbots and how to build one

Join us for an introduction to Viber chatbots, including key features, how they differ from Viber Business Messages, and how to build a Viber chatbot without needing to be a developer.

FCMB Nigeria: Driving growth and loyalty through conversational banking

Find out how FCMB Nigeria is embracing conversational banking to drive growth and improve access to financial services, customer satisfaction, and business efficiency.

A guide to building chatbots for Instagram

How to build effective chatbots for Instagram to capture attention, generate sales, and engage with users of the app favored by celebrities and mega-influencers.

10DLC vs short code vs toll-free: How to choose the right number

Learn the key differences between 10DLC, short code, and toll-free numbers for SMS campaigns and discover which option is best for you.

14 ways chatbots can elevate the healthcare experience

Explore 14 ways to improve patient interactions and speed up time to resolution with a reliable AI chatbot.

The future of conversational messaging with RCS: A look ahead with BT

Dive into the world of RCS and conversational messaging in this exclusive interview with BT. Learn how our partnership is shaping the future of customer engagement.

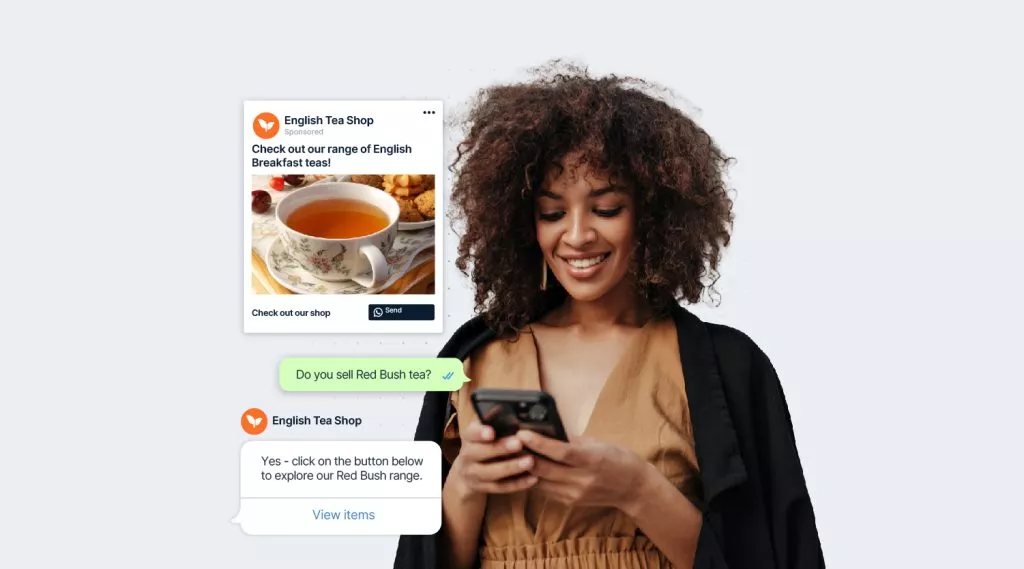

WhatsApp marketing: How to build the perfect strategy

How to craft an effective WhatsApp marketing strategy that incorporates the apps latest features and tools designed especially for business.

RCS Business Messaging for secure banking

Deep dive into the world of banking via RCS Business Messaging and learn about the benefits, use cases, and how to get started.

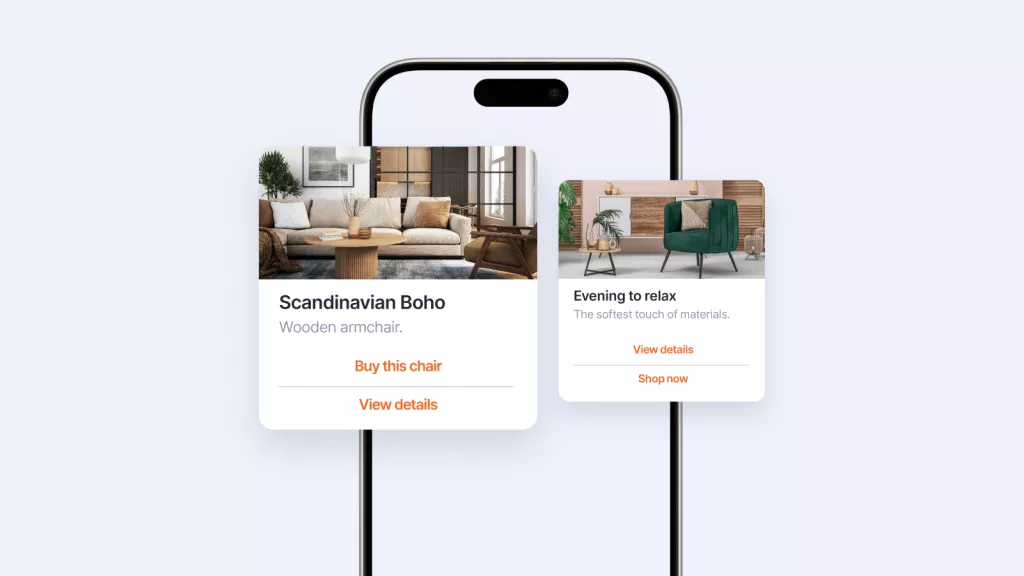

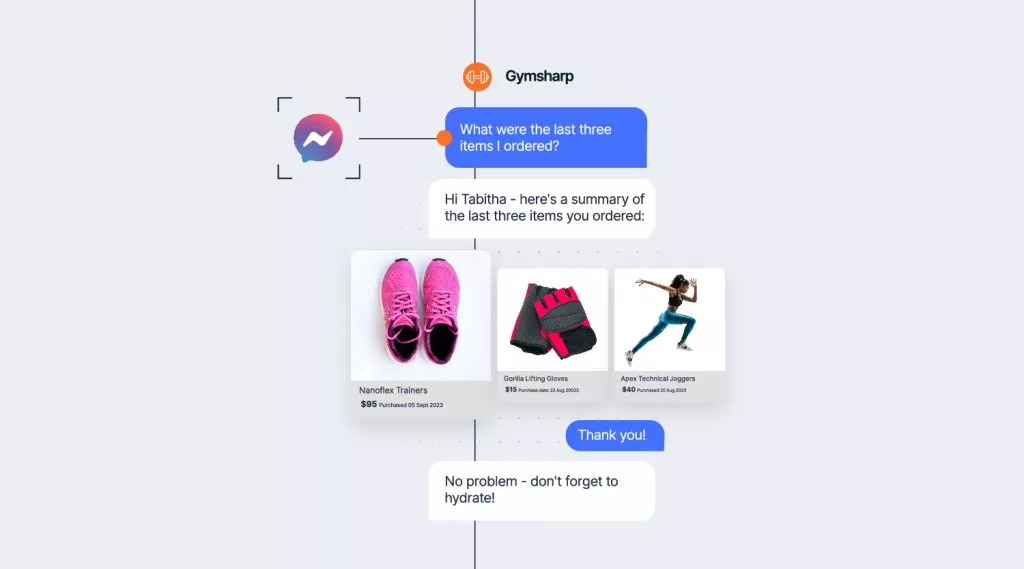

How to build a Facebook Messenger chatbot

Everything you need to know about creating a chatbot for Facebook Messenger, including the unique Messenger benefits you can tap into, and step-by-step instructions on how to build your own chatbot with no coding knowledge required.

Finding the right CPaaS vendor using the Gartner® Magic Quadrant™ report

A practical guide to using the Gartner Magic Quadrant report for CPaaS to help you choose the right cloud communication provider for your business.

Unlock the power of network APIs: How CAMARA transforms Telco innovation

Dive into the world of open network APIs with CAMARA and see how this game-changing project is driving innovation for Telcos.

e& enterprise: Empowering businesses into a digital future with emerging technologies

e& enterprise is leading the way in helping businesses transition into a digital future with emerging technologies. Find out how our partnership can empower your organization to drive innovation.

10DLC registration: A step-by-step guide for A2P messaging

Learn how to register a 10DLC number and campaign with Infobip to improve deliverability for SMS and MMS messages.

Promotional vs. transactional SMS – What is the difference?

Promotional SMS is used for marketing and sales, while transactional SMS is used to provide information. Learn more about the differences and how to leverage these two types of SMS for your business.

Virtual travel assistants: Redefining the travel experience in the age of AI

Dive into the transformative potential of virtual assistants in the travel industry, including the use cases that they are ideal for, and how to create your own.

WhatsApp for eCommerce: 22 use cases and examples

Discover 22 ways to use WhatsApp for eCommerce brands and offer customers better journeys and end-to-end experiences.

Automated conversations at scale with RCS and Vertex AI

Find out how building chatbots for the RCS channel that are powered by Google’s Vertex AI can deliver some exceptional use cases.

SMS character limits & message length: What you should know

Standard SMS messages are 160 characters long. Exceeding this limit can lead to extra costs and delivery problems, affecting customer experience.

AI Forecast: Industries we can expect to transform by 2030

Artificial intelligence and machine learning are disrupting existing markets. But it’s not only technology-driven industries that are affected. Find out how we can expect knowledge work to transform in the next five years, too.