Enterprise AI chatbots in 2026: What’s changed, what matters, and how to choose

Learn what an enterprise AI chatbot is, how it differs from an AI agent, and what to look for in an enterprise-ready platform in 2026.

The enterprise chatbot market has transformed in recent years, and a new problem has emerged along the way: there’s no shared definition of what an enterprise AI chatbot is.

Is it a chatbot? An AI agent? A conversational AI platform? The already complex vocabulary has shifted faster than most vendor websites, and the platform decision shapes how far automation can scale. A platform built for FAQ automation won’t handle autonomous multi-step reasoning. A platform built for AI agents, on the other hand, may have no structured flow management. And a platform that promises both may fully deliver neither.

In this guide, you’ll learn what separates a genuine enterprise AI chatbot from one built with predefined flows, how enterprise chatbots and AI agents relate to each other, and which option or combination is best for you. Then we’ll cover what to look for in a platform when the stakes are enterprise-grade, and where the real ROI evidence sits across retail, finance, support, and automotive.

Before comparing platforms or features, it helps to be precise about what an enterprise AI chatbot is, and what it isn’t.

What is an enterprise AI chatbot (and what it isn’t)

An enterprise AI chatbot is an AI-native conversational platform designed for large organizations to automate customer and employee interactions at scale. It includes enterprise-grade security, compliance, deep integrations, and omnichannel reach built in from the start.

That definition does a lot of work. Here’s what each part means in practice.

Not a rule-based bot. Basic chatbots follow scripts. Enterprise AI chatbots use natural language processing (NLP), natural language understanding (NLU), and generative AI to detect intent, handle variation, and personalize responses, even when customers don’t phrase things in a typical, clear way.

Not an SMB tool with an enterprise price tag. SMB chatbots optimize for quick setup and low cost. Enterprise AI chatbots are built for scale, compliance, and integration depth, connecting to Salesforce, SAP, ServiceNow, and your internal systems, while meeting SOC 2 Type II, ISO 27001, and GDPR requirements, without a custom security review for every deployment.

Not a single-channel solution. A chatbot that lives only on your website is a website widget. An enterprise AI chatbot operates natively across WhatsApp, SMS, RCS, Messenger, Instagram, Live Chat, voice, and more, with unified conversation management and cross-channel context that follows the customer, not the channel.

Not a standalone product. At enterprise scale, a chatbot that exists outside your customer data, contact center, and journey orchestration is a liability. The most effective enterprise AI chatbots are modules within a larger platform, sharing data, context, and logic with every other part of the customer experience stack.

What enterprise AI chatbots are: infrastructure for automated, intelligent, personalized customer conversations at scale, across every channel your customers use, with the security and compliance controls your legal team can sign off on.

Once that baseline is clear, the next confusion point becomes unavoidable: how enterprise chatbots differ from AI agents, and why the distinction matters in real deployments.

Enterprise chatbot vs. AI agent: The distinction that matters in 2026

This is the question every serious enterprise buyer is asking right now, and most vendors are either conflating the two or avoiding the comparison entirely.

Here is the practical distinction:

AI agents go beyond predefined rules and reason through multi-step tasks autonomously. They take actions across multiple systems and handle complexity that doesn’t fit a predefined flow. When a customer’s order is delayed, damaged, and needs a replacement shipped to a new address with a discount applied, that’s a task for an AI agent, not a chatbot flow.

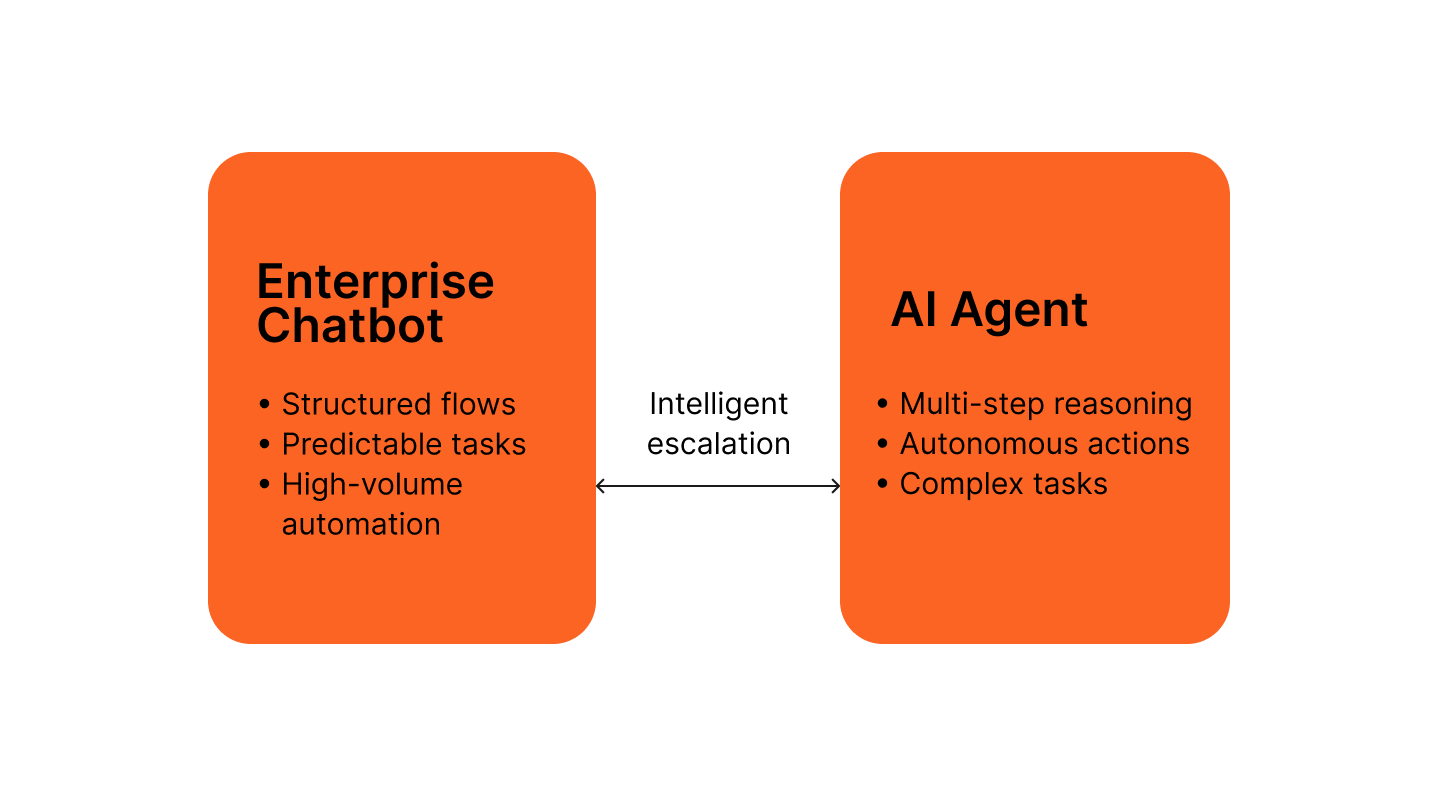

The mistake most buyers make is treating this as an either/or choice. It isn’t.

- Structured flows handle high-volume, predictable interactions efficiently.

- Autonomous AI agents handle complex, ambiguous cases.

The platforms that deliver real enterprise ROI are the ones that offer both, with intelligent escalation between them so neither capability is wasted.

This is where architecture starts to matter more than feature lists. A chatbot builder bolted onto an AI agent product is not the same as a platform where both are native modules sharing the same data layer, the same channel infrastructure, and the same escalation logic.

Infobip’s AgentOS is built on the principle that AI chatbots and AI agents are not separate products. They are components of the same platform, with configurable escalation paths between them and a shared Conversational CDP layer that gives every interaction (chatbot or agent) access to the same unified customer profile. AgentOS Insights monitors your customer’s journey, showing what they want, where they struggle, and how to move towards conversion.

Understanding this division shifts the evaluation mindset from terminology to architecture, which is exactly where platform selection should begin.

What to look for in an enterprise AI chatbot platform

With that context, the criteria below focus on capabilities that determine whether a platform holds under enterprise scale and complexity.

Here are the six criteria that separate genuine enterprise platforms from scaled-up SMB tools.

GenAI and NLP depth: Beyond keyword matching

The baseline for enterprise NLP in 2026 is intent detection that handles variation, context, and ambiguity, not keyword matching dressed up as AI.

Look for:

- LLM flexibility: Can you connect your preferred large language model, or are you locked into one vendor’s model? Enterprise needs change. Model lock-in is a long-term risk.

- Retrieval-augmented generation (RAG): RAG grounds LLM responses in your actual knowledge base, reducing hallucination and keeping answers accurate and on-brand. Without it, GenAI chatbots invent answers.

- Conversation memory: Does the platform retain context across a multi-turn conversation, or does it treat each message as a new interaction? Context loss is one of the most common failure points in enterprise chatbot deployments.

- Guardrails: Can you define what the chatbot will and won’t do? For regulated industries such as financial services, healthcare, and insurance, guardrails are non-negotiable, not a premium add-on.

- Model routing: Advanced platforms route queries to different models based on complexity and cost. Simple intents go to fast, cheap models. Complex reasoning goes to more capable ones. This keeps both quality and cost in check.
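The model-routing idea in the last item can be sketched in a few lines. This is a minimal illustration, not any vendor’s implementation: the model names, the cue words, and the word-count threshold are all assumptions chosen for the example, and a production router would more likely use a classifier than keywords.

```python
from dataclasses import dataclass

# Hypothetical model tiers; these names are placeholders, not real model IDs.
FAST_MODEL = "fast-small-model"
CAPABLE_MODEL = "capable-large-model"

# Illustrative cue words that hint at multi-step reasoning (an assumption).
COMPLEX_CUES = ("refund", "replacement", "dispute", "instead")


@dataclass
class RoutingDecision:
    model: str
    reason: str


def route_query(text: str, max_simple_words: int = 12) -> RoutingDecision:
    """Pick a model tier using a crude keyword-and-length heuristic."""
    lowered = text.lower()
    cues = [cue for cue in COMPLEX_CUES if cue in lowered]
    if cues:
        return RoutingDecision(CAPABLE_MODEL, "complex cues: " + ", ".join(cues))
    if len(lowered.split()) > max_simple_words:
        return RoutingDecision(CAPABLE_MODEL, "long message")
    return RoutingDecision(FAST_MODEL, "short, single-intent message")


simple = route_query("What are your store hours?")
complex_case = route_query(
    "My order arrived damaged and I need a replacement sent to a new address"
)
```

Here `simple` is routed to the fast tier and `complex_case` to the capable tier. Returning the reason alongside the model keeps routing decisions auditable, which helps when tuning cost against quality.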

The bar has moved well past basic NLP. A vendor using GPT isn’t a differentiator; it’s the baseline in 2026.

Even the strongest AI falls short if it can’t operate consistently across the channels your customers already use.

Omnichannel that means omnichannel

Most enterprise chatbot platforms support three to seven channels and call it omnichannel. It isn’t.

True omnichannel at enterprise scale means:

- Native deployment across 15+ channels, including WhatsApp, SMS, RCS, Apple Messages for Business, Facebook Messenger, Instagram, Viber, Telegram, LINE, Live Chat, Zalo, KakaoTalk, email, voice, and in-app messaging, without third-party middleware or channel-specific rebuilds.

- One set of bot logic, many channels. The same chatbot flow should deploy across multiple channels and senders simultaneously. If adding a new channel requires rebuilding the bot, that’s a channel adapter, not omnichannel.

- Cross-channel context. If a customer starts a conversation on WhatsApp and continues via Live Chat, the platform should carry context across both. Most platforms don’t.

- Unified conversation management. Every channel surfaces on the same platform, with the same routing rules, the same escalation logic, and the same reporting layer.
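One way to picture cross-channel context is a conversation store keyed by customer rather than by channel. The sketch below is an assumption-laden illustration: `ConversationStore`, its method names, and the in-memory dictionary are hypothetical, and a real platform would persist this data and resolve customer identity across channels first.

```python
from dataclasses import dataclass


@dataclass
class Message:
    channel: str
    text: str


class ConversationStore:
    """Hypothetical store that keeps one history per customer, not per channel."""

    def __init__(self) -> None:
        self._history = {}  # customer_id -> list of Message

    def record(self, customer_id: str, channel: str, text: str) -> None:
        self._history.setdefault(customer_id, []).append(Message(channel, text))

    def context_for(self, customer_id: str) -> list:
        # Return a copy so callers cannot mutate the stored history.
        return list(self._history.get(customer_id, []))


store = ConversationStore()
store.record("cust-42", "whatsapp", "Where is my order?")
store.record("cust-42", "live_chat", "Following up on my order")

# The Live Chat turn can see the earlier WhatsApp message.
channels = [m.channel for m in store.context_for("cust-42")]
```

Because the key is the customer ID, the same bot logic can read the full history whichever channel the next message arrives on.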

Channel breadth matters more than most buyers expect at the evaluation stage. The channels your customers prefer three years from now may not be the ones they prefer today, and rebuilding your chatbot logic for each new channel is expensive.

Channel coverage solves reach, but relevance depends on whether the chatbot understands who it’s talking to.

Customer data integration: The missing layer

This is the gap that separates the top-tier enterprise AI chatbot platforms from everyone else, and it’s almost never discussed in vendor comparison articles.

A chatbot that doesn’t know who it’s talking to is starting every conversation at zero. It asks for information the customer already gave you. It offers generic responses when personalized ones are possible. It misses upsell moments because it has no behavioral context. It creates friction instead of removing it.

The answer is a Conversational CDP, a unified customer profile that’s accessible in real time during every chatbot conversation. When a customer contacts you, the chatbot already knows their purchase history, their open tickets, their loyalty tier, their last interaction channel, and any behavioral signals from your app or website.
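As a rough illustration of what a profile-aware first message could look like, here is a minimal sketch. The `CustomerProfile` shape and `opening_message` function are hypothetical and do not represent Infobip’s data model or API; the fields simply mirror some of the attributes listed above.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CustomerProfile:
    """Hypothetical profile shape; field names are illustrative only."""
    name: str
    loyalty_tier: str = "standard"
    open_tickets: list = field(default_factory=list)
    last_channel: str = ""


def opening_message(profile: Optional[CustomerProfile] = None) -> str:
    """Build the first bot message, personalized when a profile is available."""
    if profile is None:
        # No profile: the conversation starts at zero.
        return "Hi! How can I help you today?"
    if profile.open_tickets:
        # Anticipate the likely reason for contact instead of asking again.
        ticket = profile.open_tickets[0]
        return f"Hi {profile.name}, are you contacting us about ticket {ticket}?"
    return f"Hi {profile.name}, how can I help you today?"


known = opening_message(CustomerProfile("Ana", open_tickets=["T-1001"]))
anonymous = opening_message()
```

The difference between `known` and `anonymous` is the gap the section describes: one message anticipates the customer’s need, the other starts from scratch.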

For seamless operation, this feature can’t be added later. It should be part of the initial architectural design. A chatbot that pulls from a separate CDP via API will always be slower and less reliable than one where the CDP is a native layer of the same platform.

Look for platforms where customer data isn’t an integration but infrastructure.

Personalization only works if teams can build and maintain it without becoming a bottleneck.

Build modes for every team

Enterprise chatbot deployments can involve multiple teams with different technical capabilities, depending on the requirements. CX teams need to iterate quickly without engineering support. Developers need to build complex logic without fighting a no-code interface designed for simpler use cases. The platform should accommodate both without forcing a choice.

The three build modes that matter at enterprise scale:

- No-code: Drag-and-drop visual flow builder, pre-built templates, point-and-click intent configuration. CX and operations teams can build and deploy sophisticated chatbots without writing a line of code.

- Low-code: JavaScript coding elements within the visual builder for teams that need conditional logic, custom API calls, or dynamic content without full developer engagement.

- Pro-code: Full Python support with frameworks like LangGraph and AutoGen for development teams building complex agentic workflows, custom integrations, or proprietary AI models into the chatbot layer.

Of course, flexibility means nothing if the platform can’t meet enterprise security and compliance standards.

Security, compliance, and infrastructure

For enterprise buyers in regulated industries, this section determines shortlist eligibility before any other criteria.

The baseline compliance checklist:

- SOC 2 Type II

- ISO 27001

- GDPR (with data residency options by region)

- AES-256 encryption for data in transit and at rest

- Differential privacy controls

- Role-based access control and audit logging

Beyond certifications, infrastructure matters:

- Uptime SLA: 99.95% is the enterprise standard. Anything lower is a risk conversation with your operations team.

- Global carrier infrastructure: For chatbots that operate on SMS, RCS, or voice channels, carrier connections determine delivery reliability. Infobip has 850+ carrier connections across 190+ countries.

- Data center distribution: Infobip also has 40+ data centers globally, with regional hosting options for organizations with data sovereignty requirements.
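To make the uptime figure concrete, it helps to convert an SLA percentage into allowed downtime. The helper below is a simple arithmetic sketch; the function name is made up for this example, and actual SLA terms (measurement windows, exclusions for planned maintenance) vary by contract.

```python
HOURS_PER_YEAR = 365 * 24  # non-leap year
HOURS_PER_30_DAYS = 30 * 24


def allowed_downtime_hours(sla_percent: float, period_hours: float) -> float:
    """Return the downtime budget, in hours, implied by an uptime SLA."""
    return period_hours * (1 - sla_percent / 100)


yearly_hours = allowed_downtime_hours(99.95, HOURS_PER_YEAR)
monthly_minutes = allowed_downtime_hours(99.95, HOURS_PER_30_DAYS) * 60
```

At 99.95%, the budget works out to roughly 4.4 hours per year, or about 22 minutes in a 30-day month. At 99.9%, it doubles to about 8.8 hours per year, which is why the second decimal place matters in procurement conversations.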

Always verify exact certifications directly with the vendor’s compliance team before finalizing procurement. Regulatory landscapes change, and published certification lists can lag behind current status.

Even with the right foundations in place, no chatbot resolves every conversation, which makes escalation design critical.

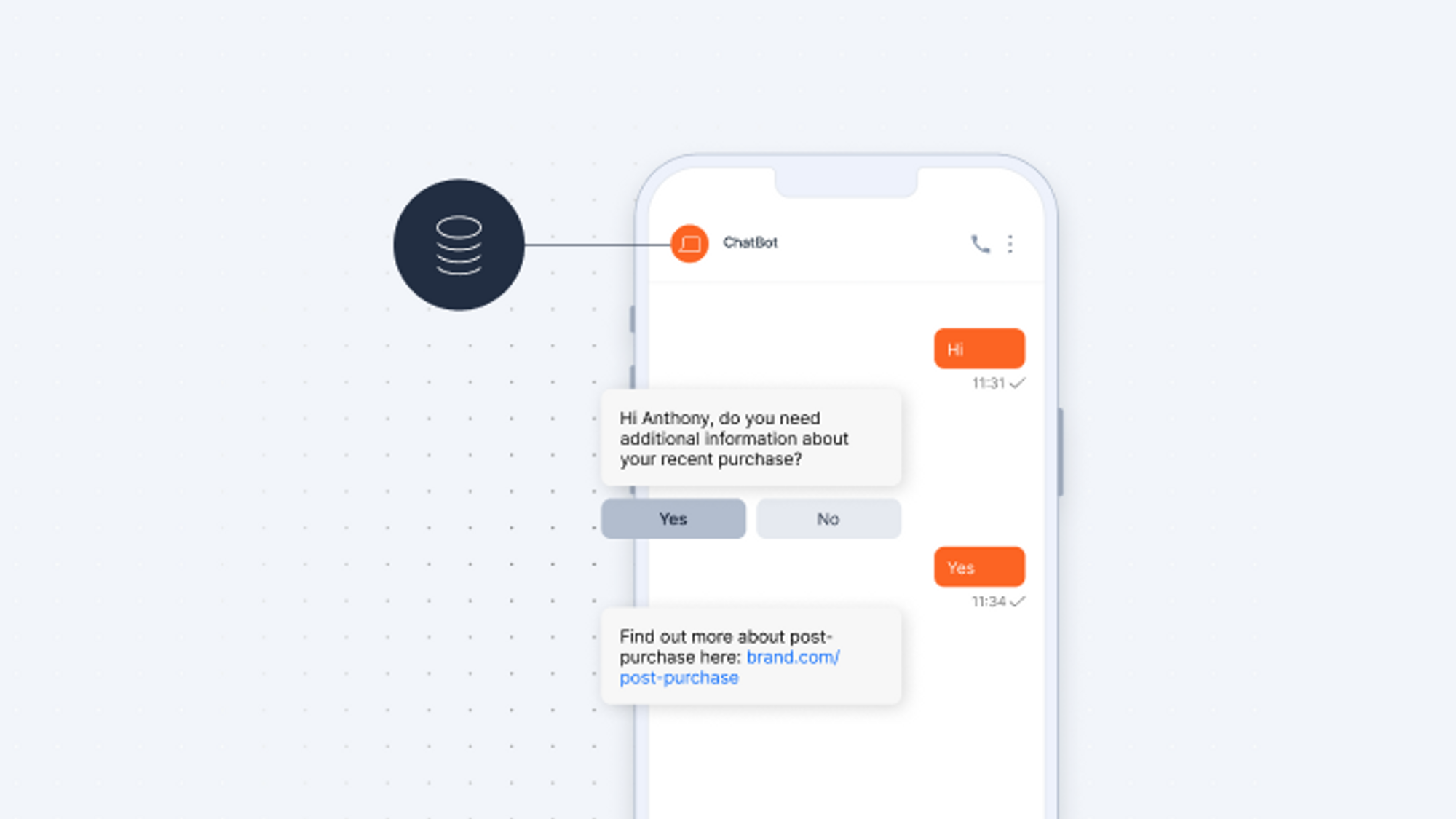

Escalation paths: What happens when the bot can’t answer

This is where most enterprise chatbot deployments break down, not in the chatbot’s ability to handle easy queries, but in what happens when a query exceeds its capability.

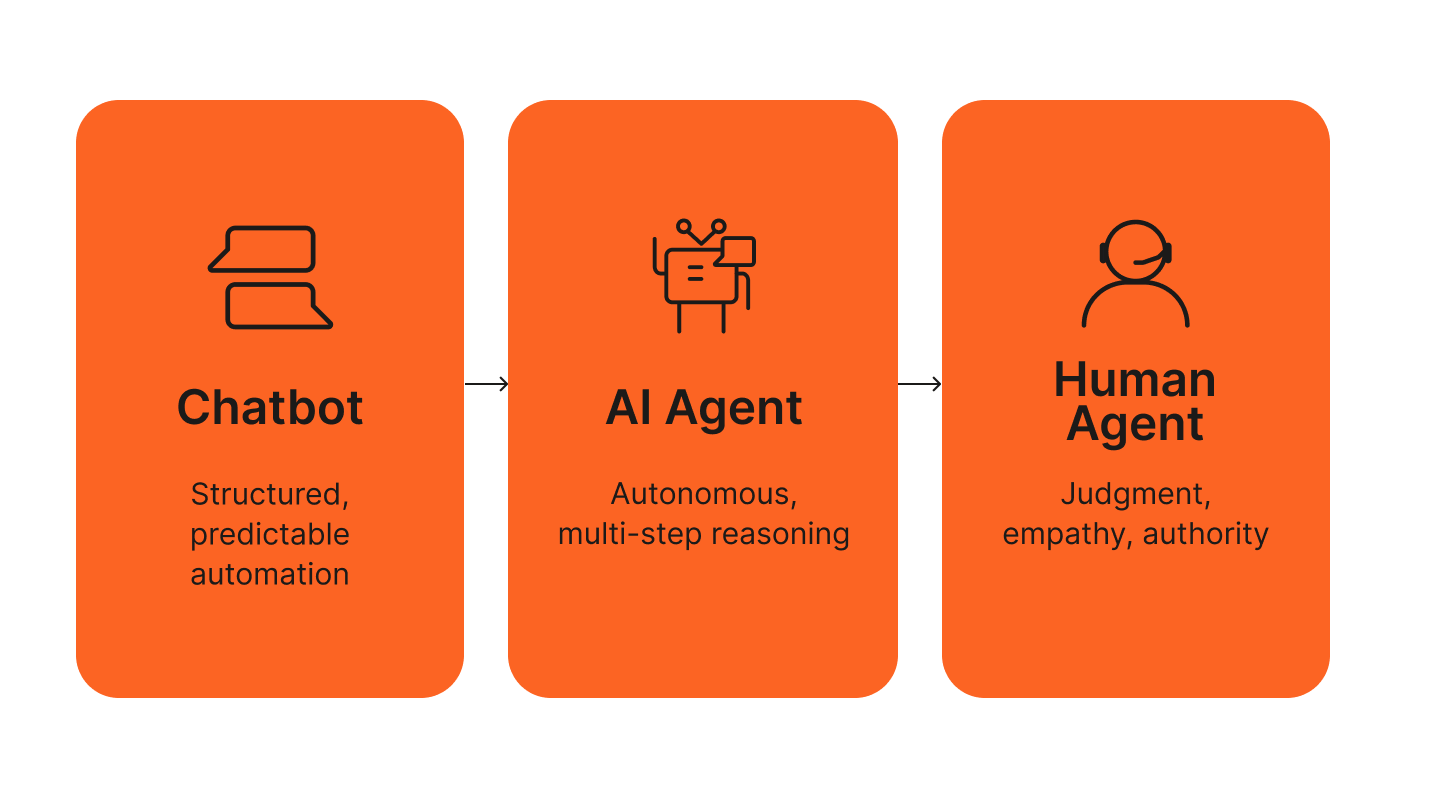

The architecture that works at enterprise scale is a three-tier escalation path:

- AI chatbot handles structured, high-volume interactions, which is the majority of customer queries.

- AI agent handles complex, multi-step reasoning tasks that exceed structured flow logic but don’t yet require human judgment.

- Human agents handle escalations that require empathy, authority, or genuinely novel situations.

The critical requirement is context continuity at every handoff. When a chatbot escalates to an AI agent, the agent has full conversation history. When the AI agent escalates to a human, the human agent sees the full transcript, the customer’s CDP profile, and every action taken in the session.
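The three tiers and the context-continuity requirement can be sketched as a single session object that moves between tiers without losing anything. This is an illustrative model only: `Tier`, `Session`, and `escalate` are hypothetical names, and a real platform would also attach the customer profile and apply routing rules.

```python
from dataclasses import dataclass, field
from enum import Enum


class Tier(Enum):
    CHATBOT = 1
    AI_AGENT = 2
    HUMAN = 3


@dataclass
class Session:
    customer_id: str
    tier: Tier = Tier.CHATBOT
    transcript: list = field(default_factory=list)
    actions: list = field(default_factory=list)


def escalate(session: Session, reason: str) -> Session:
    """Move the session up one tier, keeping transcript and actions intact."""
    if session.tier is Tier.HUMAN:
        return session  # already at the top tier; nothing to do
    session.actions.append(f"escalated from {session.tier.name}: {reason}")
    session.tier = Tier(session.tier.value + 1)
    return session


session = Session("cust-42")
session.transcript.append("Customer: my order is late and damaged")
escalate(session, "multi-step request")       # chatbot -> AI agent
escalate(session, "customer asked for a person")  # AI agent -> human
```

The design point is that escalation mutates the tier, not the session: the human agent receives the same transcript and action log the chatbot and AI agent built up.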

Escalation without context is just a transfer. Context-complete escalation is what customers actually experience as good service.

Evaluate this by asking vendors to walk you through a realistic complex escalation scenario. The handoff quality usually reveals the platform’s architecture faster than any feature list.

These architectural choices only matter if they translate into measurable business outcomes.

Enterprise AI chatbot use cases that truly drive ROI

The evaluation criteria matter. Real customer results matter more. Here’s where enterprise AI chatbots are delivering measurable impact across industries, with Infobip customer data.

Retail and eCommerce

Retail use cases for enterprise AI chatbots cluster around three high-value interactions: product discovery, order management, and promotional campaigns.

Ride-hailing platform Bolt deployed a WhatsApp chatbot for promotional campaigns and saw a 40% increase in conversion rate. The chatbot handled campaign qualification, voucher distribution, and follow-up, all automated and within a single WhatsApp thread.

Nissan Saudi Arabia used a WhatsApp chatbot for lead qualification and customer engagement, achieving an 80% session engagement rate. This metric reflects how well the conversation held attention, not only how many people opened it.

The pattern across retail deployments: chatbots that have product catalog access, order data, and customer history outperform generic flows by a significant margin. This comes back to the CDP integration point from the evaluation criteria section.

Financial services and insurance

Compliance requirements make financial services one of the harder verticals for AI chatbot deployment, and one of the highest-ROI verticals when it’s done correctly.

LAQO Insurance deployed an AI chatbot to handle policy inquiries and customer service interactions. The chatbot now resolves 30% of queries entirely on its own, with no human agent involvement. For an insurance provider where agent time is both expensive and constrained, that containment rate has direct P&L impact.

The compliance angle: financial services chatbots need guardrails that prevent the chatbot from providing advice it isn’t authorized to give, audit logging for regulatory reporting, and data handling that meets financial sector requirements. These are not optional features; they are deployment prerequisites.
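A guardrail paired with audit logging might look like the sketch below. Everything here is an assumption for illustration: the blocked phrases, the fallback wording, and the in-memory `audit_log` are hypothetical, and real guardrails typically rely on classifiers and policy engines rather than substring checks.

```python
from datetime import datetime, timezone

# Hypothetical phrases that signal a request for unauthorized advice.
BLOCKED_TOPICS = ("investment advice", "which stock", "should i buy")

FALLBACK = "I can't advise on that, but I can connect you with a licensed advisor."

# In-memory audit trail for the example; production systems would persist this.
audit_log = []


def guarded_reply(customer_id: str, message: str, draft_reply: str) -> str:
    """Return the draft reply unless the message hits a blocked topic."""
    lowered = message.lower()
    blocked = any(topic in lowered for topic in BLOCKED_TOPICS)
    # Log every decision, blocked or not, for later regulatory review.
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "customer_id": customer_id,
        "blocked": blocked,
    })
    return FALLBACK if blocked else draft_reply


reply = guarded_reply("cust-7", "Should I buy this fund?", "Yes, it looks great!")
```

In this example the draft reply is suppressed and the fallback is returned, while the audit entry records that a guardrail fired.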

Customer support and operations

24/7 availability and call deflection are the most common enterprise chatbot business cases, and the ROI evidence is consistent.

Farm Superstores deployed an AI chatbot for customer support operations and achieved a 60% reduction in operational costs. The chatbot handles the high-volume, repetitive query layer (product availability, store hours, order status, and the return process), freeing human agents for interactions that genuinely require them.

The most important metric in support deployments is the containment rate: the percentage of conversations the chatbot resolves without escalation. High containment rates reduce cost. Low containment rates create friction. The difference is usually in the quality of the AI, the depth of data integration, and the accuracy of escalation logic.
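Containment rate is simple to compute once escalations are tracked. The function below is a minimal sketch using the definition above; the conversation counts in the example are made-up numbers, not customer data.

```python
def containment_rate(total_conversations: int, escalated: int) -> float:
    """Share of conversations the chatbot resolved without escalation."""
    if total_conversations <= 0:
        raise ValueError("total_conversations must be positive")
    if not 0 <= escalated <= total_conversations:
        raise ValueError("escalated must be between 0 and total_conversations")
    return (total_conversations - escalated) / total_conversations


# Hypothetical month: 1,000 conversations, 700 of them escalated.
rate = containment_rate(1000, 700)
```

With these illustrative numbers, `rate` is 0.3, meaning 30% of conversations were contained. Tracking the figure per intent, rather than only in aggregate, usually shows where escalation logic or data access needs work.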

Automotive and motorsport

TGR Haas F1 used Infobip’s chatbot and messaging infrastructure for fan engagement and campaign automation, achieving an 80% lower cost of ad conversion and a 76% engaged user rate across campaigns.

The automotive sector more broadly uses enterprise AI chatbots for lead qualification: routing prospective buyers through model selection, financing questions, and test drive booking without requiring a dealership contact. The chatbot handles qualification; the human handles the close.

These results highlight what’s possible, but they depend heavily on how the platform is designed under the hood.

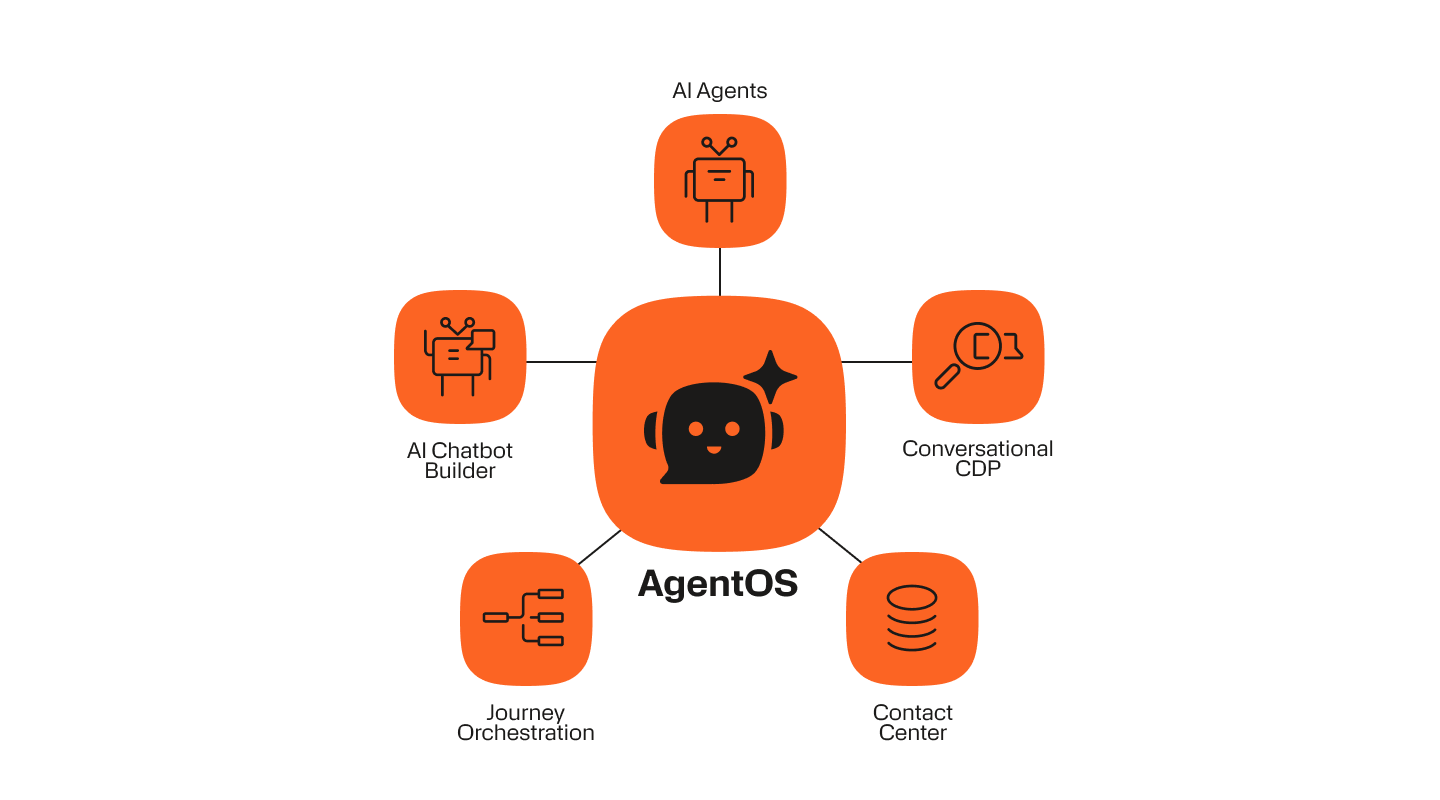

How Infobip’s AgentOS approaches enterprise chatbots differently

Now that you’re up to date on enterprise AI chatbots in 2026, here’s what truly differentiates Infobip’s approach from the rest of the enterprise chatbot market.

A chatbot builder inside an agentic AI platform

Most enterprise chatbot vendors sell a chatbot product. Some have added AI agent capabilities as a separate module or a recent acquisition. The integration is visible if you look beyond the surface: separate contracts, separate APIs, separate data models, and escalation paths that require custom engineering to function reliably.

Infobip’s AgentOS is not a chatbot product. It is a unified platform: AI chatbot builder, AI agents, Cloud Contact Center, Conversational CDP, and journey orchestration, built as a single system with a single API and a single contract.

This matters operationally. When your chatbot escalates to an AI agent, there is no API handshake between two separate products. When your AI agent escalates to a human in the contact center, there is no data translation layer. The entire stack shares one data model, one conversation context, and one customer profile.

For enterprise IT and operations teams evaluating total cost of ownership, this architectural difference has significant implications for integration complexity, vendor management, and long-term platform maintenance.

The differences below focus on architecture and operating model, not surface-level features.

Customer familiarity before the first message

Infobip’s Conversational CDP is not a CRM integration. It’s a native layer of AgentOS, meaning every chatbot conversation starts with a unified customer profile already loaded: purchase history, open support tickets, loyalty status, behavioral signals from your app or website, last interaction channel, and any custom attributes your data team has built.

The practical impact: chatbots that know the customer before the first message don’t ask redundant questions. They personalize from the first response. They surface relevant offers based on behavioral signals. They route more accurately because they have context that rule-based routing can’t use.

This is the capability that turns a chatbot from a cost-reduction tool into a revenue-generation tool. Personalization at the chatbot layer, powered by real customer data, is where the highest-ROI enterprise deployments are being built.

15+ channels, 850+ carrier connections, zero rebuilding

Infobip’s native channel infrastructure covers WhatsApp, SMS, RCS, Apple Messages for Business, Facebook Messenger, Instagram, Viber, Telegram, LINE, Live Chat, Zalo, KakaoTalk, email, voice, and in-app messaging, all from one builder, one set of bot logic, deployed simultaneously across multiple channels and senders.

The carrier infrastructure behind this (850+ carrier connections, 190+ countries, 40+ data centers, and a 99.95% uptime SLA) is not middleware. It’s Infobip’s own infrastructure, built over two decades as a CPaaS provider. That infrastructure is what makes carrier-grade delivery reliability a realistic promise rather than a marketing claim.

For enterprise buyers evaluating channel expansion risk: adding a new channel on Infobip does not require rebuilding bot logic. The same flow deploys to the new channel. The same reporting covers it. The same escalation rules apply.

From no-code to pro-code in one platform

Infobip’s AI chatbot builder supports all three build modes from one interface:

- No-code: Visual drag-and-drop flow designer with pre-built templates, a real-time conversation simulator for testing before deployment, and GenAI intent detection that non-technical teams can configure without training data setup.

- Low-code: JavaScript coding elements embedded within the visual builder for conditional logic, dynamic content, and custom API calls, without requiring a full development sprint.

- Pro-code: Python support with LangGraph and AutoGen integration for development teams building agentic workflows, custom model integrations, or proprietary business logic at the chatbot layer.

All three modes produce the same enterprise-grade chatbot, operating on the same platform infrastructure, with the same security and compliance controls applied uniformly.

Once the platform decision is made, the remaining challenge is sequencing: where to start and how to scale safely.

How to get started with an enterprise AI chatbot

The fastest path to enterprise chatbot deployment is templates, not because templates are the best long-term solution, but because they reduce time-to-value while your team learns the platform.

Infobip’s pre-built templates cover the most common enterprise use cases: FAQ handling, lead qualification, order tracking, appointment booking, support ticket creation, and escalation flows. A basic FAQ or support chatbot can be live within hours using these templates and the no-code builder.

For more complex deployments such as multilingual flows across multiple channels, custom CRM integrations, agentic escalation paths, or industry-specific compliance requirements, Infobip’s professional services team can scope, design, and deploy enterprise-grade chatbots on your behalf. Timeline for complex builds typically runs days to weeks depending on scope, integration depth, and the number of channels.

Before any deployment goes live, use the real-time conversation simulator to test flows end-to-end, including edge cases, escalation triggers, and multilingual handling. Most deployment failures come from insufficient testing of the 20% of conversations that don’t follow the expected path.

A practical decision framework for where to start:

- Identify your highest-volume, most repetitive customer interaction. This is your first chatbot use case, the one with the fastest ROI and the lowest risk.

- Map the escalation path before you build the flow. Know exactly when and how the chatbot should escalate to an AI agent or human agent before writing a single flow node.

- Decide on your build mode based on team capability, not aspiration. Start no-code if your CX team will be responsible for maintaining and updating the chatbot after launch. Involve developers early if the use case requires complex integrations.

- Connect your customer data before launch. A chatbot deployed without CDP integration will underperform compared to one that has it from day one.

- Set containment rate as your primary success metric. Everything else (CSAT, AHT, conversion rate) follows from getting containment right.

Talk to an enterprise chatbot expert

If you’re evaluating enterprise AI chatbot platforms, the criteria in this guide give you a framework for separating genuine enterprise capability from scaled-up SMB tools. The next step is to find out how those criteria apply to your specific use case, channels, and customer data environment.

You’ve got the framework. Now see it in practice.