Blog

Explore a wide range of topics, get inspired by the latest trends, and learn how to create conversational experiences that make an impact on your bottom line.

Posted on April 8, 2026.

Conversational AI use cases in 2026: Real-world examples and results by industry.

Discover diverse conversational AI use cases transforming industries. Learn about key applications, success stories, and future AI trends.

Tags:

- Blog.

- Best Practices.

Posted on April 8, 2026.

WhatsApp for government services: The future of citizen engagement.

Learn how WhatsApp helps governments modernize citizen services with secure messaging, automation, and real-time, two-way communication at scale.

Tags:

- Blog.

- Best Practices.

- WhatsApp Business.

- Government.

Posted on April 7, 2026.

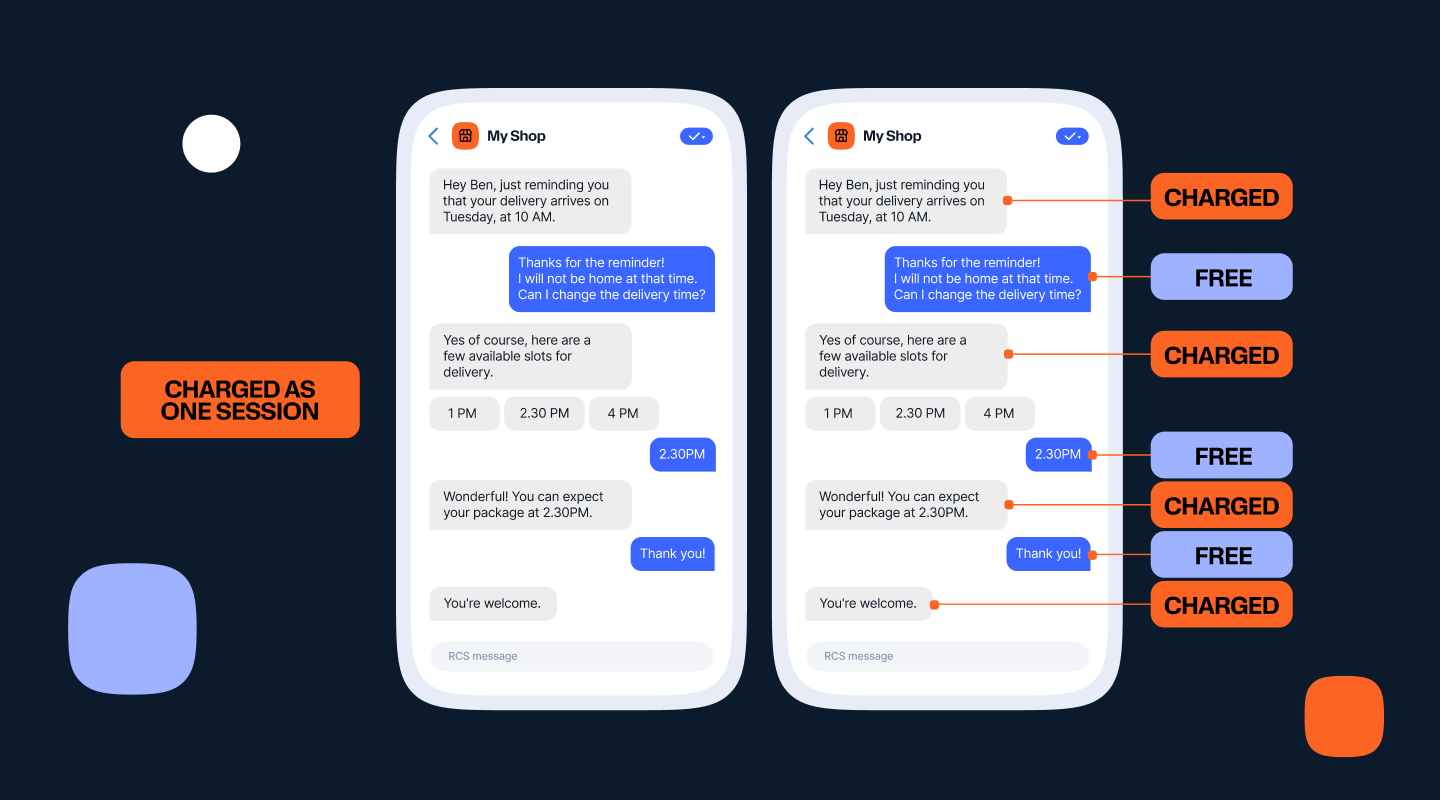

What is conversational billing for RCS? Explained for US businesses.

Conversational billing is coming to the US. Here's what it means, how RCS Interactive Sessions work, and why the shift from per-message to per-conversation pricing changes everything for CX.

Tags:

- Blog.

- Awareness.

- RCS.

Posted on April 7, 2026.

Zalo: Supercharging conversational commerce with localization and AI.

In APAC, brand communication is evolving from one-way broadcasts to two-way conversations, driven by mobile-first, localized customer expectations. Discover how Infobip and Zalo help brands turn messaging into seamless, high-converting customer journeys.

Tags:

- Blog.

- CX.

- Trends and Insights.

- Zalo.

- Spotlight.

Posted on March 30, 2026.

How to design and build a WhatsApp chatbot – with examples.

Learn how to build a WhatsApp chatbot step by step, from API setup to AI agents. Includes real business examples, pricing, and platform tips.

Tags:

- Customer Service.

- Best Practices.

- WhatsApp Business.

Posted on March 27, 2026.

What is Conversational AI? Definition, How It Works, and 2026 Trends.

Get a clear conversational AI definition, learn how it works with NLP and machine learning, see real examples, and discover how conversational AI agents are reshaping CX in 2026.

Tags:

- Blog.

- Conversational experience.

- Best Practices.

Posted on March 25, 2026.

On-premise vs cloud contact center: Key differences and how to switch.

Compare on-premise and cloud contact centers side by side. Learn key differences in cost, scalability, AI capabilities, and security — plus a step-by-step migration guide.

Tags:

- Blog.

- Best Practices.

Posted on March 24, 2026.

Best chatbot builders in 2026.

A complete guide to the different types of chatbot builders available in 2026, including their features and use cases. Discover which chatbot builder is ideal for your business goals and how you can use it.

Tags:

- AI chatbots.

- customer service.

- CX.

- Trends and Insights.

Posted on March 20, 2026.

Political SMS compliance isn’t a constraint anymore, it’s your competitive edge.

Campaign Verify tokens are now mandatory across every sender type. Here is what that means for your 2026 election messaging strategy, and why the window to act is closing.

Tags:

- Blog.

- Awareness.

Posted on March 19, 2026.

Conversational AI integration: B2B implementation guide (2026).

This guide shows B2B teams how to integrate agentic conversational AI into their customer service stack, connect core systems, stay compliant, and prove ROI.

Tags:

- Blog.

- AI chatbots.

- Awareness.

Posted on March 17, 2026.

WhatsApp news and updates (2026).

Every major WhatsApp feature launch from Q3 2025 through Q1 2026, explained clearly for businesses ready to put them to use.

Tags:

- Conversational experience.

- Trends and Insights.

- WhatsApp Business.

Posted on March 16, 2026.

What is a confirmation email? Examples and templates.

The email everyone waits for after clicking “buy.” Here’s what confirmation emails are, why they matter, and how to write ones that keep customers confident and coming back.

Tags:

- Best Practices.

- Email.
